on the dangers and stupidity of generative ai

theft, coups, neocolonialism, and the nepo baby to end all nepo babies

why, you might ask me, should you care about ai scraping the internet for its generative material?

simple: because it is stealing everything under the sun in an attempt to bypass real human creativity and labor, and perhaps more importantly, the rightful satisfaction and compensation that come from the just pursuit of that labor.

but just how much is it stealing, joelle?

a lot.

Upwards of 170,000 books, the majority published in the past 20 years, are in LLaMA’s training data. In addition to work by Silverman, Kadrey, and Golden, nonfiction by Michael Pollan, Rebecca Solnit, and Jon Krakauer is being used, as are thrillers by James Patterson and Stephen King and other fiction by George Saunders, Zadie Smith, and Junot Díaz.

Of the 170,000 titles, roughly one-third are fiction, two-thirds nonfiction. They’re from big and small publishers. To name a few examples, more than 30,000 titles are from Penguin Random House and its imprints, 14,000 from HarperCollins, 7,000 from Macmillan, 1,800 from Oxford University Press, and 600 from Verso. The collection includes fiction and nonfiction by Elena Ferrante and Rachel Cusk. It contains at least nine books by Haruki Murakami, five by Jennifer Egan, seven by Jonathan Franzen, nine by bell hooks, five by David Grann, and 33 by Margaret Atwood. Also of note: 102 pulp novels by L. Ron Hubbard, 90 books by the Young Earth creationist pastor John F. MacArthur, and multiple works of aliens-built-the-pyramids pseudo-history by Erich von Däniken.

from revealed: the authors whose pirated books are powering generative ai by alex reisner.

is any of this legal? maybe; maybe not.

there’s a new lawsuit being brought against meta (may they burn to the fucking ground) by authors sarah silverman, richard kadrey, and christopher golden, alleging that meta is using their books - also known as copyrighted material - to train its large language model to better imitate human speech. legality aside, the ethics of large scale language models (and the arms race to develop generative AI in particular) are, to my mind at least, acceptable only to those who refuse to engage with the world and its people and nonhuman inhabitants in any serious terms whatsoever. astral codex ten wrote an excellent analogy for the statements from meta, sam altman, and other members of evil tech incorporated that claim to address the mounting concerns over AI by promising to mitigate future disaster once that disaster has come close enough:

Imagine ExxonMobil releases a statement on climate change. It’s a great statement! They talk about how preventing climate change is their core value. They say that they’ve talked to all the world’s top environmental activists at length, listened to what they had to say, and plan to follow exactly the path they recommend. So (they promise) in the future, when climate change starts to be a real threat, they’ll do everything environmentalists want, in the most careful and responsible way possible. They even put in firm commitments that people can hold them to.

An environmentalist, reading this statement, might have thoughts like:

Wow, this is so nice, they didn’t have to do this.

I feel really heard right now!

They clearly did their homework, talked to leading environmentalists, and absorbed a lot of what they had to say. What a nice gesture!

And they used all the right phrases and hit all the right beats!

The commitments seem well thought out, and make this extra trustworthy.

But what’s this part about “in the future, when climate change starts to be a real threat”?

Is there really a single, easily-noticed point where climate change “becomes a threat”?

If so, are we sure that point is still in the future?

Even if it is, shouldn’t we start being careful now?

Are they just going to keep doing normal oil company stuff until that point?

Do they feel bad about having done normal oil company stuff for decades? They don’t seem to be saying anything about that.

What possible world-model leads to not feeling bad about doing normal oil company stuff in the past, not planning to stop doing normal oil company stuff in the present, but also planning to do an amazing job getting everything right at some indefinite point in the future?

Are they maybe just lying?

Even if they’re trying to be honest, will their bottom line bias them towards waiting for some final apocalyptic proof that “now climate change is a crisis”, of a sort that will never happen, so they don’t have to stop pumping oil?

from open ai’s “planning for agi and beyond” by astral codex ten.

are you beginning to understand the scope of the problem?

in some lighter assessments of ai and the general shenanigans of the great billionaire grifters we’re all forced to pretend aren’t some of the stupidest, least soulful, most creatively devoid shells of people who have ever masqueraded around on the planet, pretending to have any legitimate claims to real intelligence, you could turn to “i cannot believe the shit that morons are getting up to with chatgpt” by max read, or “using chatgpt and other ai writing tools makes you unhireable. here's why.” by doc burford. this one, to me, gets to the deeper crux of the issue within the ai debate, beyond just whether or not stealing artists’ work is ethical (it’s not!) or legal (it shouldn’t be!): the task of automating creative and generative output to machines is a stupid person’s idea of a smart idea. why the fuck would i want to read a novel that comes from an amalgamation of stolen text, let alone one that is automated via a system that has no understanding of the human condition? why would anyone? what the fuck is the point of anything if that’s where we’ve decided to invest our time and money? which leads me to my next point: that none of these technologies, no matter how laughably stupid they seem, are coming into existence within a vacuum.

the development of these open language models and other AGI plans costs well into the billions of dollars. billions, with a b. i understand we live in hell; believe me, i am well acquainted with that reality. but the fact that some of the richest people in the world are willing to pour billions of dollars into trying to gimmick a machine into vomiting up feasibly human-sounding sentences, instead of using that money for quite literally anything else in the world, is like watching the episode of bojack horseman where he’s competing on mr. peanutbutter’s new gameshow, ‘hollywoo stars and celebrities: what do they know? do they know things? let’s find out!’ against a voiced-by-daniel-radcliffe daniel radcliffe.

bojack has five hundred million jillion dollars in prize money, which is going to be donated to charity, when he decides to enter into the double-or-nothing round, where he can either correctly guess the gameshow’s final answer or — and this is one of my favorite commentaries on hollywoo(d) and wealth from the show’s entire six and a half season run — have it literally burned to ash in a roaring fire that opens up on stage. the final question - which bojack has to answer correctly in order to give his millions of dollars to charity, or else watch them go up in flames - asks bojack which child star played the titular role in the harry potter franchise, as said child star stands next to him. but bojack is upset because actually-voiced-by-daniel-radcliffe daniel radcliffe hurt his feelings, and so he answers, in a moment of tension and subterfuge gleefully presided over by a not-actually-dead j.d. salinger, the infamous final words: “i want to say … elijah wood?”

and into the pit of flames the billion dollar bag of cash goes.

that is how petty and wasteful and stupid these AGI development programs are, and they don’t even have the benefit of being funny.

but of course the tech industry has been full of scammers and inflated egos posing as wunderkinds since its inception — surely naming its hub silicon valley has had some causal hand in only the most plastic, vile, exploitative humans rising to the top — and of course the same tech millionaires that are injecting themselves with their teenage children’s blood to stay young forever and planning to build compounds (complete with shock-collared guards) to protect themselves from the masses after the next inevitable societal collapse see no irony in the fact that they themselves will go to such lengths for longer and more lucrative lives, while their very actions and waste help to condemn the rest of the planet to become a pile of smoldering ash through their rampantly irresponsible, ecologically destructive, and just not even fucking clever business ventures.

and this is not hyperbole. the billions poured into AGI development — just like the billions poured into NFTs, and cryptocurrency, and lobbying against monopoly regulation, and intentionally designing programs to produce as much sway on the developing human brain as possible — these dollars are not divorced from the rest of the world. they do not exist solely within the bubble of banking and business and tech feudalism: they are, hypothetically, the same dollars (or digital signifiers of dollars) that could be used to buy you or me groceries, or pay off someone’s student loan debt. these are not just dollars that are being deliberately invested into some of the most disastrous technology that could possibly be helmed by some of the world’s most callous and powerful people; they are also dollars that are being deliberately withheld and stockpiled instead of mitigating any of the tsunami waves of destruction caused by mining for the minerals necessary to create these computers, or reinventing feudalism, or pushing consumerism and conspicuous consumption, or calling for coups in bolivia like musk openly did on twitter (before buying it in one of the worst botched deals of the last five years). these are people whose ecological destruction is beyond anything you or i could begin to fathom; and they are continuing these paths and streams of profits knowingly.
the blood on their hands will be definitively within the millions, if not billions; any just and reasonable assessment of both their conscientious participation in and acceleration of these industries (and the necessary political and militarized systems that allow both their positions as feudal overlords and the systems themselves to exist) — any assessment of their position within the larger schemes of human life and enterprise has to end either in burying one’s head in the sand or in admitting and reconciling fully with the fact that the billionaire technocratic class is committing ecological destruction, mass cultural displacement, and intentional poverty on levels previously only attained by the heads of empires and, within the last hundred or so years, war criminals and oil barons.

make no mistake: in the scales of recorded history, the concentration of wealth and willful misuse of it for no ends other than their own gain will place these men and women alongside the most evil beings to have ever lived.

and for what?

well, for one, for a fake writing program that doesn’t actually know how to fucking write.

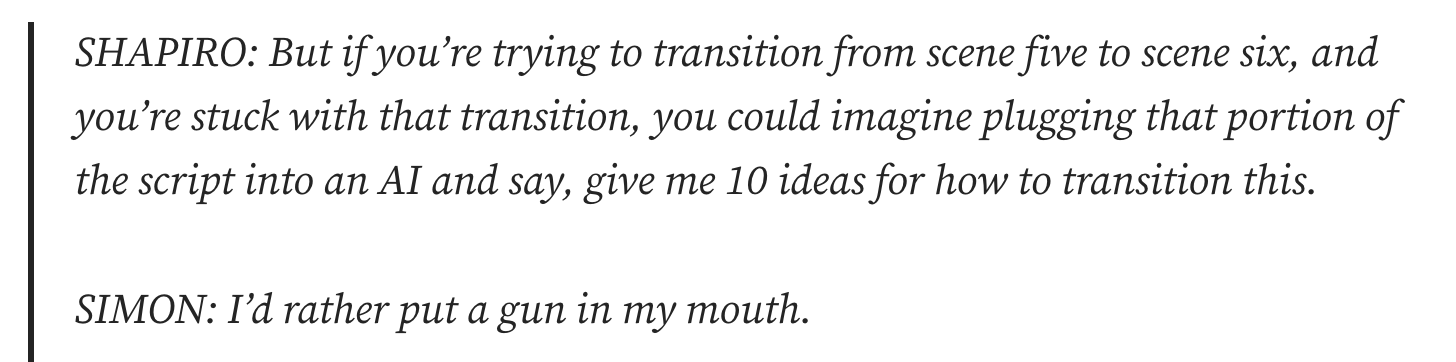

from doc burford:

Right now, we have AI drawing generators that still don’t understand human anatomy well enough to draw hands — that’s because when it draws, it’s just training itself based on other 2d images it sees.

As you may be aware, two dimensional drawings are generally of three dimensional objects.

So, when you see a hand rendered in 2D, you think of it as a 3D object. You know how a hand ought to look, how the fingers bend and flex, how the skin looks, how many fingers you expect to see, and so on (rarely more than five, occasionally less than five, and you likely know the average lengths of each finger — the middle finger is the longest, the thumb is offset, the pinky is the smallest, and so on).

The AI does not know that. Its entire world — and training data — is two dimensional. … But it can’t give you an actual hand, because it doesn’t have any concept of a real hand and how the hand should work. [emphasis mine]

aren’t we so happy to be living in the day and age we’re living in? aren’t we so happy that elon musk promised to solve world hunger if the UN would only “provide a plan” to do so, which they then did, only for him to turn around and spend forty-four billion dollars on twitter instead of the six billion that the UN’s plan called for? aren’t we thrilled to live in an age of such stupidity and widespread death of critical thinking that the greediest, least original thinkers of our time can come into family apartheid mine money and then convince entire squadrons of reddit goons that they’re actually special geniuses?

remember when jeb bush ran for the republican presidential nomination against trump in 2015, a lifetime ago to my own weary senses, and posted this instantly classic tweet before one of his debates?

this is what the entirety of elon musk’s grift feels like to me: a man born into a dynasty of such immense power and wealth, and born of so much political and economic cruelty, that we may not even be able to see the full scope of it until we are decades in the future — a man with everything a person could possibly want, except self-respect or their father’s approval, who then spends their entire life “trolling” online (and again, i cannot stress this enough, advocating for and helping push through a political coup in bolivia, the country with the largest lithium reserves in the entire world, a chemical element which is crucial for the development of electric cars).

but of course a man who has produced next to nothing for humanity (he did not actually invent the tesla car, nor did he even found the company) — a man who has produced nothing aside from orchestrating coups and launching a car into space, a car which will remain, by the way, floating as literal trash out in the atmosphere as a reminder of his own place in the annals of history — of course a man with all the money in the world but none of the intelligence or patience or humility to put creativity into action, would think generative AI is a good investment.

as doc burford puts it:

The problem with a great number of these AI fellas is that they think artists are important, because art — whether writing or drawing or anything else — can cut to our very heart and bring about emotions in a way that seems magical. So they think that we must be wizards of some kind, and if they could only just release content into the world, the way we do, then they would get all this magical acclaim they perceive us as having. …… It may seem magical, but it isn’t. It’s a skill, a skill that anyone can learn. You, reading this, maybe you aren’t a writer and want to be, or maybe you are a beginner, or maybe you’re just curious, or maybe you’re a pro. Whatever you are, it’s important to bear in mind: this is not magic, this is deliberate, skilled work that you are doing. …… The AI guys do not know anything about the craft — if they did, they’d just do the craft.

There is no such thing as an AI artist — there is only a client, asking a machine to make things based on their input.

the only people who think that AI generated “art” and “writing” are worthwhile and interesting concepts are those who have a fundamentally ignorant understanding of how art and writing are made. like everything else in a commodity-driven world, to a technocratic billionaire or feudal crypto lord, “art” is just another piece of content or commodity — another thing to collect or another object of sorts that demonstrates…something. this is why NFTs were so popular, aside from the fact that the vast majority of the uber wealthy are incredibly fucking stupid: to own something which is “unique” and “one of a kind” signifies some sort of relationship to the world, and if this “unique” and “one of a kind” item costs billions of dollars to purchase, your relationship to the world through its acquisition is even more grand and impressive. and so people bought NFTs, and people invest in AI art, and people continue to fundamentally move further and further from anything that might actually put them back into the realm of regular humanity. but owning the thing isn’t the point. the thing as a finished product - whether it’s a fraudulently marketed constellation of pixels on a screen or a stupid fucking jeff koons sculpture - isn’t even the point. the ownership of said art is the least important part of the entire equation, one that could actually be removed from the entire making and creating process and have zero negative impact on the way that things are made and created.

the point of art is that you are actually supposed to make it, and engage with it, and that whether you experience the thing as the viewer or reader — its own form of engagement — or you are the one actually crafting and sculpting the thing, your own humanity must by necessity be stirred and engaged in the process. that is the whole fucking point of making anything. that is the whole fucking point of existing.

but if you cannot make anything — if you have become so corrupt that you wash your hands with the blood of bolivian political activists and miners — if you are for nothing except for your own ego and for material accumulation — if you are so detached from the reality of why people make anything at all for any reason other than for a profit motive, then of course you will see art as an object, and of course you will have no real appreciation or understanding for its place in the human heart, and then of course you will think funding different generative AI programs to produce things that approximate what a non-thinking, non-feeling human being might identify as art is a grand old idea. and if you’re particularly stupid, you’ll even think that idea is worth billions.

but just because something is incredibly stupid doesn’t mean it can’t also be incredibly dangerous. musk himself, in fact, has proven quite the opposite — that the more insulated, unwilling to learn, and ego-driven a person is, and the more stupidly he behaves, the more damage he is capable of creating.

“Practicing an art, no matter how well or badly, is a way to make your soul grow, for heaven's sake. Sing in the shower. Dance to the radio. Tell stories. Write a poem to a friend, even a lousy poem. Do it as well as you possibly can. You will get an enormous reward. You will have created something.”

kurt vonnegut, a man without a country

but how can you become richer than god and still remain in touch with any of your latent humanity?

you can’t.

you cannot rob the rest of the world of food, and medicine, and justice, and commit unspeakable acts of violence, and deliberately and consciously disenfranchise and mutilate the lives of millions through your wealth accumulation — even if you never personally orchestrate a coup, even if you never lift a finger, even if your business career doesn’t have a larger and more destructive environmental footprint than the entire economic output of entire nation-states — even if you commit no overt violence, your simple hoarding is in and of itself an unspeakable act of cruelty.

how can anyone live like that and still have any idea of what might be the point of making actual art?

how can anyone live like that and still have any idea what might be the point of anything at all?

For the still-not-convinced: If you need an AI to come up with ideas for you, you don’t even belong in the fucking room, because ‘coming up with ideas’ is literally the most basic level skill to have. A basketball player who can’t dribble doesn’t belong in the NBA, so why the fuck do you think you deserve a spot on my writing team if you need a computer to do what any goddamn fucking eight year old can do? Take the fucking hint, you fucking fraud: if you need AI to do the most basic tasks required of you as a writer, then you aren’t employable as a writer. Period. Fuck you. You’re a waste of everyone’s time.

Doc Burford

the manic pixie dream girl’s guide to existential angst is a sometimes free newsletter (and the occasional poem) from joelle schumacher. if you enjoy their work or would like to support it, you can become a paid subscriber, and/or subscribe to or write in to their advice column (but preferably and).
